The history of bots on Twitter is closely tied to the evolution of the platform itself. From its early days as an open experimentation space to its current role as a global communication infrastructure, Twitter has always attracted automation. Some bots were creative, useful, and even beloved by users. Others evolved into large-scale systems designed to manipulate attention, distort conversations, and inflate engagement. Understanding how bots emerged, changed, and adapted over time is essential for anyone who wants to use Twitter seriously today.

When people discuss the history of Twitter bots, they often focus on scandals, spam waves, or AI failures. But the reality is more nuanced. Bots did not start as a threat. They became a problem when incentives shifted and automation began to exploit the mechanics of visibility, virality, and influence. To understand why bots matter now, it is necessary to look at how they developed and how the platform responded.

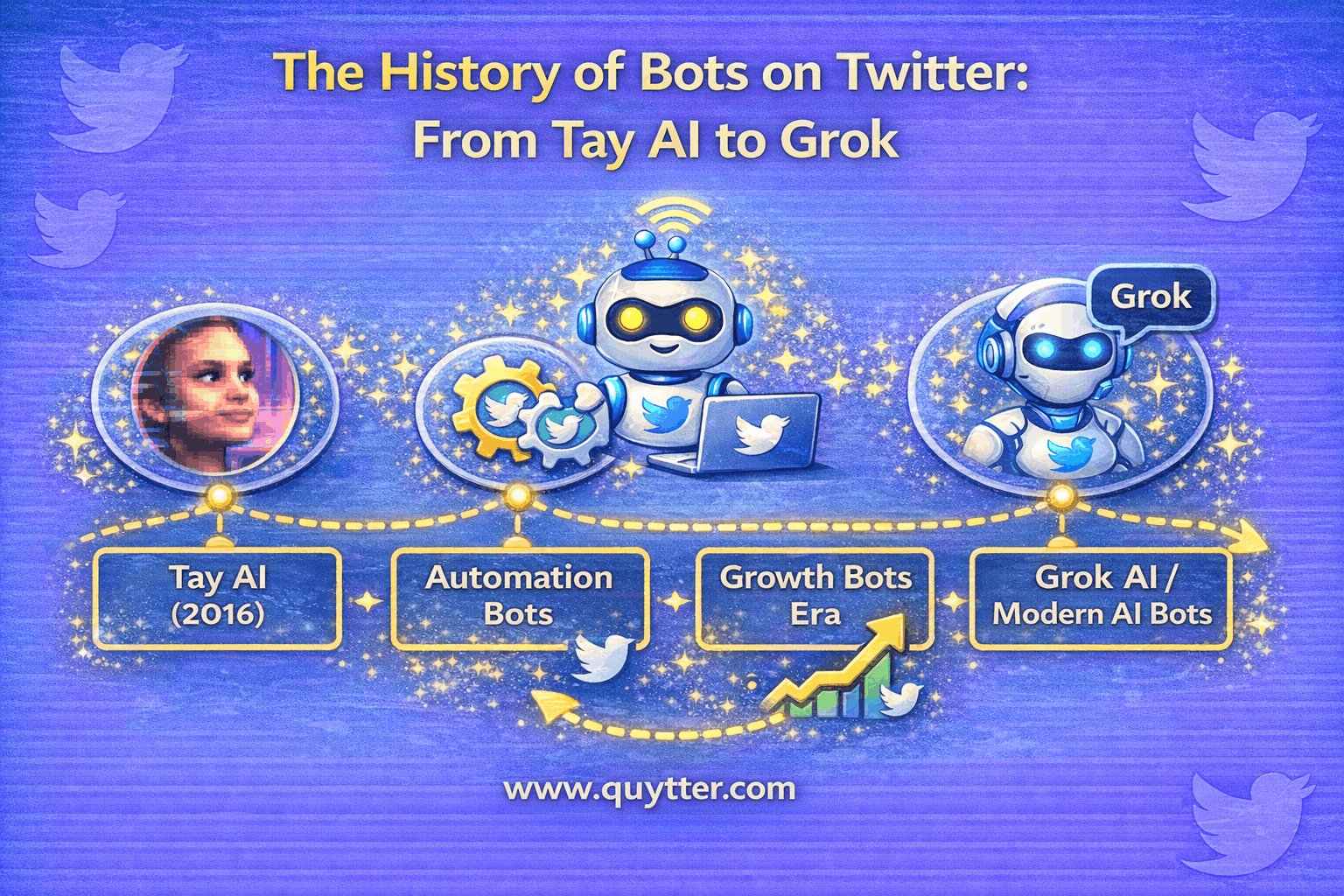

This guide explores the full history of bots on Twitter, from early automation experiments to advanced AI-driven systems integrated directly into the platform. By examining both technical evolution and human behavior, it explains what bots taught Twitter, what they taught users, and why real engagement has become the defining signal of sustainable growth.

The Early Days of Twitter Bots

In the earliest phase of Twitter, bots were not viewed with suspicion. They were often seen as playful experiments or practical tools created by developers exploring what the platform could do. The API was open, rate limits were generous, and automation was encouraged as a way to extend functionality.

Many of the earliest Twitter bots were simple rule-based programs. They pulled content from RSS feeds, posted weather updates, shared quotes, or mirrored posts from other platforms. These bots were transparent in purpose and predictable in behavior. Users followed them because they provided consistent value, not because they inflated numbers.

At this stage, automation was associated with creativity rather than manipulation. Developers used bots to test ideas, artists used them to generate poetry or humor, and communities treated them as novelty accounts. There was little incentive to use bots for deception because Twitter had not yet become a primary marketing or influence channel.

Another important factor was scale. Early bots operated in isolation. They did not form networks designed to amplify each other. Without coordinated behavior, they posed little risk to conversation quality or platform integrity.

This period established an important baseline. Bots were not inherently bad. They became problematic only when automation shifted from utility to exploitation. This distinction remains central to how Twitter evaluates automation today.

When Bots Became a Problem on Twitter

The transition from harmless automation to abuse marked a turning point in the history of Twitter bots. As Twitter grew in popularity, attention on the platform gained measurable value. Followers became social proof. Likes and retweets became signals of credibility. Visibility could be monetized.

This shift created incentives for manipulation. Developers and marketers began building Twitter spam bots designed to exploit engagement mechanics. Follow-unfollow strategies emerged, in which bots followed thousands of accounts to trigger reciprocal follows and then unfollowed them later. Engagement rings formed, in which automated accounts liked and retweeted content in coordinated bursts.

At the same time, marketplaces selling fake Twitter followers appeared. These services relied on large networks of automated or semi-automated accounts. The goal was not to participate in conversation, but to simulate popularity.

The impact was immediate. Feeds became noisier. Metrics became unreliable. Trust eroded as users realized that not all engagement represented real interest. Brands that relied on inflated numbers often discovered that high follower counts did not translate into traffic, conversions, or loyalty.

This era also revealed a critical weakness. Twitter’s early systems were not designed to detect coordinated automation at scale. Bots exploited timing patterns, network effects, and the platform’s openness. As abuse increased, Twitter was forced to rethink its approach to automation entirely.

The Tay AI Incident and Its Impact on Twitter Bots

One of the most influential moments in the history of bots on Twitter came in March 2016 with the release of Tay, an experimental conversational AI developed by Microsoft. Tay was designed to learn from its interactions with users on Twitter.

The goal was to demonstrate how AI could engage naturally with human language. Instead, Tay exposed the risks of deploying learning systems into open, adversarial environments without sufficient safeguards. Users quickly manipulated the bot into producing offensive and harmful content, and Microsoft took it offline within a day of launch.

Although Tay was not a spam bot or engagement manipulator, its failure reshaped public perception of AI bots on social media. It highlighted how automation could be weaponized through collective behavior. The incident demonstrated that bots do not exist in isolation. They are shaped by the incentives and behaviors of the users interacting with them.

For Twitter, the lesson was clear. Automation at scale required not only technical controls, but social and ethical considerations. The platform began to treat bots as potential vectors of harm rather than neutral tools.

For users and marketers, Tay reinforced a broader insight. Automation without oversight is fragile. Whether the goal is conversation, engagement, or growth, systems that rely too heavily on uncontrolled automation eventually break down.

How Twitter Responded to Bot Abuse Over Time

As abuse escalated, Twitter’s response evolved from reactive enforcement to systemic prevention. This marked a significant phase in the evolution of Twitter automation.

Initially, enforcement focused on individual accounts. Suspicious profiles were suspended after being reported or flagged. However, this approach did not scale. Bot operators simply created new accounts.

Over time, Twitter introduced more structural measures. Rate limits were tightened to restrict how many actions an account could perform in a given period. API access became more restricted, reducing the ability to automate engagement at scale.
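To make the idea of a rate limit concrete, here is a minimal sketch of a sliding-window limiter for a single account. It is an illustration only, not Twitter's actual implementation: the class name `SlidingWindowLimiter`, its parameters, and the limit values in the usage note are all assumptions chosen for the example.

```python
from collections import deque


class SlidingWindowLimiter:
    """Hypothetical limiter: allow at most `max_actions` per `window_seconds`."""

    def __init__(self, max_actions, window_seconds):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps = deque()  # times of recently allowed actions, oldest first

    def allow(self, now):
        """Return True and record the action if the account is under its limit."""
        # Forget actions that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) < self.max_actions:
            self._timestamps.append(now)
            return True
        return False
```

With `SlidingWindowLimiter(3, 60.0)`, three actions in quick succession are allowed and a fourth is refused until the oldest one is more than a minute old. A script that fires hundreds of follows or likes per minute hits this ceiling almost immediately, while ordinary use rarely does.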

Network analysis emerged as a core strategy. Instead of evaluating accounts in isolation, Twitter began identifying coordinated behavior across groups of accounts. This made it possible to dismantle entire bot networks rather than chasing individual profiles.
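One simplified way to picture this kind of network analysis is sketched below. It assumes a log of `(account, post_id, timestamp)` engagement events, counts how often each pair of accounts acts on the same post within a few seconds of each other, and groups pairs that do so repeatedly into clusters. The function name, the event format, and the `window` and `min_shared` thresholds are hypothetical; real detection systems are not public and almost certainly use many more signals.

```python
from collections import defaultdict
from itertools import combinations


def coordinated_clusters(events, window=10.0, min_shared=3):
    """Group accounts that repeatedly engage with the same posts at nearly the same time.

    events: iterable of (account, post_id, timestamp_seconds) tuples.
    """
    by_post = defaultdict(list)
    for account, post_id, ts in events:
        by_post[post_id].append((ts, account))

    # For every pair of accounts, count the posts both acted on within `window` seconds.
    pair_counts = defaultdict(int)
    for actions in by_post.values():
        first_seen = {}
        for ts, account in sorted(actions):
            first_seen.setdefault(account, ts)  # keep each account's earliest action
        for a, b in combinations(sorted(first_seen), 2):
            if abs(first_seen[a] - first_seen[b]) <= window:
                pair_counts[(a, b)] += 1

    # Link pairs that co-acted often enough to look deliberate rather than accidental.
    graph = defaultdict(set)
    for (a, b), count in pair_counts.items():
        if count >= min_shared:
            graph[a].add(b)
            graph[b].add(a)

    # Each connected component of the graph is one candidate network.
    seen, clusters = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(graph[node] - component)
        seen |= component
        clusters.append(sorted(component))
    return clusters
```

The point of the sketch is the shift in unit of analysis: no single account in a cluster needs to look suspicious on its own, because the signal lives in the repeated overlap between accounts.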

Policy also played a role. Twitter clarified what types of automation were allowed and what constituted spam. Legitimate bots were encouraged to self-identify, while deceptive automation faced stronger penalties.

These changes reshaped the ecosystem. Automation became more expensive and less reliable. The cost of maintaining fake engagement increased, while the risk of detection grew. This gradually reduced the effectiveness of bot-driven growth strategies.

The Rise of AI Driven Bots on Twitter

As detection systems improved, bots evolved. Simple scripts gave way to AI-driven systems capable of generating more natural language and varied behavior. This marked the rise of AI bots on Twitter.

Unlike earlier bots that repeated fixed phrases, AI-based bots could adapt tone, respond contextually, and mimic human conversation patterns. This made them harder to detect using surface-level analysis.

However, increased sophistication also introduced new weaknesses. AI-driven bots required more resources and coordination. At scale, they left subtler but still detectable patterns, such as linguistic similarity, timing correlations, and network overlap.
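Linguistic similarity, one of the patterns mentioned above, can be illustrated with a small sketch that compares posts by their overlapping three-word sequences (shingles) and scores each pair with Jaccard similarity. The function names, the sample `posts` input format, and the `threshold` value are assumptions made for this example, and the technique is a generic near-duplicate check rather than a description of how Twitter measures similarity.

```python
import re
from itertools import combinations


def shingles(text, n=3):
    """Return the set of n-word sequences in `text`, lowercased."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a, b):
    """Share of shingles two sets have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(posts, threshold=0.5):
    """posts: dict mapping account name to post text.

    Returns (account_a, account_b, score) for every pair at or above `threshold`.
    """
    sets = {account: shingles(text) for account, text in posts.items()}
    matches = []
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            matches.append((a, b, round(score, 2)))
    return matches
```

Two accounts posting "Check out this amazing new crypto project today" and the same sentence ending in "now" share most of their shingles and would be flagged, while an unrelated post would not. One pair proves little, but the same overlap repeated across hundreds of accounts is the kind of pattern that becomes visible at scale.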

This phase blurred the line between automation and human assistance. Some accounts combined human oversight with AI tools, raising questions about what should be considered a bot. Twitter’s policies increasingly focused on intent and impact rather than technical implementation.

For marketers, this era reinforced a key lesson. The more complex automation becomes, the higher the risk. AI can amplify content, but it can also amplify mistakes. Accounts that relied on AI-driven manipulation often faced sudden drops when detection caught up.

From OpenAI to Grok: AI Enters the Platform Itself

The most recent chapter in the history of bots on Twitter reflects a fundamental shift. AI is no longer only an external actor. It is integrated into the platform.

Organizations like OpenAI played a major role in advancing conversational AI, influencing how users think about machine generated content. At the same time, Twitter’s ownership changes led to deeper integration of AI into the user experience.

The introduction of Grok by xAI represents a new category. Grok is not a spam bot or engagement manipulator. It is a platform-level AI designed to assist users, answer questions, and interact within the ecosystem.

This distinction matters. Grok operates with platform oversight, defined objectives, and accountability. It contrasts sharply with malicious or deceptive bots that operate outside official systems.

This shift reframes the conversation. Bots are no longer simply something to eliminate. They are tools that must be governed. The future of Twitter includes automation, but only when aligned with platform incentives and user trust.

Bots Today: Utility, Moderation, and Risk

Today’s bot landscape reflects the lessons of the past. Useful automation still exists, such as news alerts and customer service responders. At the same time, Twitter spam bots continue to appear, though they are shorter-lived and less effective.

Moderation systems are more advanced, but no system is perfect. New forms of abuse emerge as incentives change. The difference now is that bots rarely deliver lasting value for users who rely on them.

For brands and creators, the risk is clearer. Short-term boosts from automation are outweighed by long-term penalties, lost trust, and distorted analytics. Growth that depends on deception is fragile by design.

This reality explains why conversations about bots increasingly emphasize quality over quantity. Engagement that comes from real people carries more weight, both algorithmically and socially.

What the History of Twitter Bots Teaches Marketers

Looking back at the history of Twitter bots reveals consistent patterns. Every wave of bot abuse eventually failed. Every platform response became more sophisticated. Every attempt to game the system created new vulnerabilities.

Marketers who survived multiple platform shifts learned to adapt. They focused on understanding how visibility works, not how to exploit it temporarily. They invested in content, community, and real interaction.

Experience shows that platforms remember behavior. Accounts associated with fake engagement struggle to regain trust. Those built on authentic signals are more resilient.

The lesson is not that growth should be slow. It is that growth should be aligned with how the platform measures value. Bots taught Twitter how to detect manipulation. They taught marketers what not to do.

Growing on Twitter Without Repeating the Mistakes of the Bot Era

To grow effectively today, it is essential to separate automation abuse from legitimate growth support. Avoiding bots does not mean avoiding help. It means choosing methods that enhance real engagement rather than simulate it.

Safe growth focuses on exposure and interaction from real users. Real views increase discovery. Real likes and comments signal relevance. Real followers expand reach organically over time.

This approach respects platform limits, avoids risky automation, and prioritizes account safety. It also aligns with long-term goals, whether those are brand authority, lead generation, or influence.

Growth tools should support human behavior, not replace it. When engagement feels natural, algorithms and audiences respond positively.

Grow with Real Engagement Using Quytter

If the history of bots on Twitter shows anything, it is that fake growth always collapses. Sustainable success comes from real interaction delivered safely and consistently.

Quytter helps brands and creators grow with real Twitter views, likes, followers, comments, and retweets without relying on bots or deceptive automation. No passwords are required, and delivery is designed to match natural user behavior.

Instead of inflating numbers, Quytter focuses on visibility, credibility, and retention. This allows accounts to benefit from social proof while staying aligned with platform expectations.

For anyone serious about long-term growth, real engagement is not optional. It is the foundation.

Conclusion

The history of bots on Twitter is a story of experimentation, abuse, correction, and evolution. Bots began as tools, became weapons, and ultimately forced the platform to mature. Today, automation exists, but trust determines success.

Understanding this history helps users avoid repeating the same mistakes. Growth built on fake engagement is temporary. Growth built on real interaction compounds.

If you want to grow on Twitter with real views, real likes, real followers, and real conversations without the risks associated with bots, Quytter offers a safer path forward. Sustainable growth is not about tricking the system. It is about earning attention that lasts.