Buying Twitter accounts has quietly become a common tactic across marketing, automation, crypto, and growth hacking circles. When done correctly, it can save months of account aging, unlock instant distribution, and accelerate campaign testing. But when done blindly, it becomes one of the fastest ways to burn budget, trigger mass suspensions, and poison entire automation networks. The real problem is not buying accounts itself, but failing to spot fake or botted Twitter accounts before buying. Many buyers rely on surface metrics like follower counts or account age, only to discover later that the account is already flagged, shadow-limited, or built on synthetic activity.

This guide focuses on helping buyers understand how fake and botted Twitter accounts actually behave beneath the surface. Instead of vague warnings or fear-based advice, this article breaks down real patterns, technical signals, and behavioral indicators that experienced buyers use to evaluate account quality before money changes hands. If you want to reduce risk rather than gamble, understanding these signals is non-negotiable.

Why Spotting Fake or Botted Twitter Accounts Matters Before Buying

Buying the wrong Twitter account rarely fails slowly. In most cases, failure is immediate and expensive. Accounts that look acceptable on the surface often collapse the moment they are logged into, connected to automation tools, or used for outreach. This happens because many accounts sold on the market are already compromised long before the buyer touches them.

Fake or botted Twitter accounts introduce three core risks. The first is platform enforcement risk. Accounts built on synthetic activity patterns are often already shadow-limited or sitting close to internal trust-score thresholds, so even light usage can push them over the edge into suspension. The second risk is network contamination. When low-quality accounts are connected to automation systems, proxies, or shared infrastructure, they can flag otherwise healthy accounts by association. The third risk is false performance data. Botted engagement distorts analytics, making it impossible to measure what actually works.

From an E-E-A-T perspective, experienced operators do not ask whether an account is “real” or “fake” in a simplistic sense. They ask whether the account can safely perform its intended function without triggering enforcement. That distinction is critical. Not all automation is fatal, but unexamined automation almost always is.

Understanding Fake Accounts vs Botted Accounts vs Low-Quality Accounts

One of the biggest mistakes buyers make is treating all problematic accounts as the same. In reality, fake accounts, botted accounts, and low-quality accounts behave very differently and carry different levels of risk.

Fake Twitter accounts are typically created in bulk using scripted registration flows. They often have no real user intent behind them and are designed to exist only as numbers. These accounts usually lack organic timeline behavior, show minimal profile customization, and rely heavily on artificial followers. They are the easiest to detect and the fastest to die.

Botted Twitter accounts, on the other hand, may appear more convincing. These accounts often simulate human behavior through automation. They post, like, follow, and even reply, but do so using repetitive patterns, predictable timing, and shallow engagement logic. Many buyers mistakenly believe botted accounts are acceptable because they “look active”. In reality, these accounts often carry long-term trust damage that only surfaces later.

Then there are low-quality real accounts. These are accounts created by actual users but later abused, resold, or repurposed. They may have legitimate early history but suffer from sudden niche shifts, aggressive automation, or inconsistent activity. These accounts are the hardest to evaluate and the most misunderstood.

Understanding these categories allows buyers to evaluate risk more precisely instead of applying blanket rules that do not reflect how account enforcement actually works on Twitter.

Profile-Level Red Flags That Signal Fake or Botted Accounts

The profile is the first layer of inspection, but most buyers only look at it superficially. Experienced evaluators read profiles as behavioral artifacts rather than branding assets.

Usernames that combine random letters, numbers, or repeated structures are often indicators of bulk creation. While some real users have messy handles, patterns across multiple listings from the same seller often reveal automation at the registration stage. Display names that do not match the bio language or content niche can signal recycled accounts that have changed purpose multiple times.

Profile bios are another overlooked signal. Fake or botted accounts often use vague motivational phrases, generic emojis, or keyword-stuffed descriptions that do not align with timeline activity. A bio that claims expertise while the timeline shows no relevant conversation history is a strong inconsistency marker.

Profile images matter as well, but not in the way most people think. Stock photos, AI-generated faces, or low-resolution images reused across multiple accounts are obvious red flags. More subtle is the mismatch between profile image age and account behavior. A five-year-old account with a brand-new profile image and sudden activity spike often indicates resale or repurposing.

Follower and Following Patterns That Reveal Automation

Follower metrics are one of the most abused selling points in the account marketplace. Numbers alone mean nothing without context. The relationship between followers, following behavior, and engagement quality tells a much more accurate story.

A common botted pattern is rapid following growth without proportional engagement. Accounts that follow thousands while receiving minimal replies or likes often rely on follow-unfollow automation loops. Another red flag is geographic inconsistency. If an account claims to be based in one region but its followers cluster heavily in unrelated countries known for click farms, caution is warranted.

The follower-to-following ratio should always be evaluated in combination with timeline behavior. A high follower count with no conversational engagement is often worse than a smaller but interactive audience. Experienced buyers also look for sudden spikes in follower growth followed by long plateaus, which often indicate purchased follower injections rather than organic growth.

This is not about chasing perfect ratios. It is about identifying patterns that suggest synthetic audience building rather than genuine network development.
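The spike-then-plateau pattern described above can be checked roughly if a daily follower history is available. The sketch below is illustrative only: the function name, the `factor` and `min_gain` thresholds, and the sample data are assumptions, not values taken from any platform or tool.

```python
from statistics import median

def find_growth_spikes(daily_followers, factor=5.0, min_gain=50):
    """Return indices of days whose follower gain far exceeds the typical daily gain.

    daily_followers: follower totals, one per day, oldest first.
    factor and min_gain are arbitrary illustrative thresholds.
    """
    gains = [b - a for a, b in zip(daily_followers, daily_followers[1:])]
    if not gains:
        return []
    typical = max(median(gains), 1)  # avoid a zero or negative baseline
    return [
        i + 1
        for i, gain in enumerate(gains)
        if gain >= min_gain and gain > factor * typical
    ]

# Hypothetical history: slow growth, one large jump, then a plateau.
history = [1000, 1004, 1009, 1012, 3015, 3016, 3018, 3019]
print(find_growth_spikes(history))  # [4]
```

A flagged day is only a prompt for closer inspection. A viral post can produce the same shape, which is why the surrounding engagement still has to be read manually.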

Engagement Quality Signals That Expose Botted Activity

Engagement is where many fake or botted Twitter accounts quietly expose themselves. While likes and retweets are easy to automate, meaningful interaction is not.

One of the most common red flags is engagement without conversation. Accounts that receive likes or retweets but almost no replies, quote tweets, or profile clicks often rely on engagement pods or automated boosting. Another indicator is repetitive reply structures. If replies use similar phrasing, emojis, or sentence length across different posts, automation is likely involved.

Timing patterns also matter. Engagement that arrives in perfectly spaced intervals or immediately after posting can signal scripted behavior. Organic engagement tends to be messy, delayed, and inconsistent. Uniformity is rarely natural.
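One rough way to quantify "uniformity" is the coefficient of variation of the gaps between engagement events. This is a minimal sketch under the assumption that event timestamps (in seconds) have been collected by hand or exported from some analytics view; the function name is hypothetical and no cutoff value is implied by the platform.

```python
from statistics import mean, pstdev

def interval_cv(timestamps):
    """Coefficient of variation (std / mean) of gaps between events.

    Values near 0 mean almost perfectly even spacing; organic activity
    usually produces much larger values. Returns None when there are
    fewer than two gaps to compare.
    """
    ordered = sorted(timestamps)
    gaps = [b - a for a, b in zip(ordered, ordered[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)

scripted = [0, 60, 120, 180, 240]    # one event every 60 seconds
organic = [0, 45, 400, 410, 2000]    # irregular, bursty arrivals

print(interval_cv(scripted))  # 0.0
print(interval_cv(organic))
```

The number is a comparison aid, not a verdict. It is most useful when the same calculation is run across several listings and one stands out as unusually regular.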

From an experience standpoint, professional buyers often manually scroll through engagement profiles, checking whether interacting users themselves look real. Networks of low-quality interacting accounts often reveal each other when examined collectively.

Timeline Behavior Analysis Beyond Posting Frequency

Many sellers advertise “active accounts” based solely on posting frequency. This is a shallow metric. What matters more is how content appears over time.

Fake or botted Twitter accounts often post at unnatural intervals. This includes perfectly timed posts, identical daily schedules, or activity that ignores weekends and holidays entirely. Content repetition is another major signal. Slightly reworded tweets on the same topic posted repeatedly often indicate template-based automation.
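Slightly reworded tweets can be surfaced with a simple string-similarity pass. The sketch below uses Python's standard `difflib.SequenceMatcher`; the 0.8 threshold and the sample tweets are assumptions chosen for illustration, and a real review would need tuning against actual timelines.

```python
from difflib import SequenceMatcher
from itertools import combinations

def near_duplicate_pairs(tweets, threshold=0.8):
    """Return index pairs of tweets whose text similarity meets the threshold."""
    # Lowercase and collapse whitespace so trivial edits do not hide a match.
    normalized = [" ".join(t.lower().split()) for t in tweets]
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(normalized), 2):
        if SequenceMatcher(None, a, b).ratio() >= threshold:
            pairs.append((i, j))
    return pairs

tweets = [
    "Top 5 tips to grow your brand today",
    "Top 5 tips to grow your brand now",
    "Watching the match tonight, what a goal",
]
print(near_duplicate_pairs(tweets))  # [(0, 1)]
```

Pairwise comparison grows quadratically with the number of tweets, so this is only practical for the few hundred most recent posts of a single account.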

Contextual awareness is also important. Real users respond to trends, conversations, and events within their niche. Accounts that post generic content without referencing current discussions often lack genuine engagement intent.

A deeper behavioral signal is how accounts behave between posts. Do they like, reply, or interact organically, or do they only broadcast? Broadcast-only behavior is common among synthetic accounts and carries higher enforcement risk over time.

Account History and Creation Footprints Buyers Overlook

Account age is frequently misunderstood. Older does not always mean safer. What matters is activity density over time.

Accounts that remain dormant for long periods and then suddenly become active often trigger internal risk scoring. This is especially true when dormant accounts immediately engage in outbound actions like follows or DMs. Creation-era behavior also matters. Early tweets, early follows, and early interactions often reveal whether an account began as a real user or a synthetic asset.

Username changes, bio rewrites, and niche pivots leave footprints. While none of these alone are fatal, clusters of such changes increase risk. Experienced buyers look for continuity rather than perfection.

At this stage, buyers who understand E-E-A-T principles rely less on individual signals and more on pattern convergence. One red flag is noise. Multiple aligned signals are confirmation.
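Pattern convergence can be made explicit by recording each check as a yes/no signal and only treating the account as suspect when several line up. This is a deliberately simple sketch: the signal names and the `min_aligned` cutoff of three are hypothetical, and equal weighting is an assumption rather than a recommendation.

```python
def converging_signals(signals, min_aligned=3):
    """Return (is_suspect, flagged_names) for a dict of boolean red flags.

    A single flag is treated as noise; min_aligned or more are treated
    as confirmation. The cutoff is an arbitrary illustrative value.
    """
    flagged = sorted(name for name, hit in signals.items() if hit)
    return len(flagged) >= min_aligned, flagged

checks = {
    "uniform_timing": True,
    "follower_spike": True,
    "duplicate_tweets": True,
    "bio_mismatch": False,
}
suspect, reasons = converging_signals(checks)
print(suspect, reasons)
```

Keeping the list of flagged names alongside the verdict makes it easier to explain a rejection to a seller or to revisit the decision later.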

Hidden Automation Footprints Most Sellers Try to Hide

Even when a Twitter account looks clean on the surface, automation footprints often remain buried in behavioral data. Sellers rarely disclose how an account was grown, warmed, or monetized before resale. This is where inexperienced buyers get trapped.

One of the most telling signals is action density versus account maturity. If an account has relatively few tweets but shows a large volume of follows, likes, or DMs historically, automation was almost certainly involved. Many automation tools prioritize silent actions that do not appear publicly but still contribute to internal risk scoring.
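The action-density idea can be approximated from publicly visible counters by comparing silent actions with tweets. The sketch below is an assumption-heavy illustration: DMs are not publicly visible, so only follows and likes are counted, and the function name and sample figures are hypothetical.

```python
def silent_action_ratio(tweets, follows, likes):
    """Silent actions (follows + likes) per tweet.

    A very high ratio on an account with little public content suggests
    that most of its history consists of non-public-facing actions.
    """
    return (follows + likes) / max(tweets, 1)  # guard against zero tweets

# Hypothetical listing: 40 tweets, 4,000 accounts followed, 6,000 likes.
print(silent_action_ratio(tweets=40, follows=4000, likes=6000))  # 250.0
```

There is no universal cutoff for this ratio. It is most informative when compared across accounts of similar age in the same niche.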

Another overlooked footprint is tool signature overlap. Accounts that were previously connected to mass automation tools often show identical behavioral curves when compared across listings. This includes similar follow pacing, engagement timing, and even sleep cycles. When multiple accounts from the same seller share these patterns, the risk compounds.

Advanced buyers also watch for infrastructure scars. Accounts that have been logged in from multiple IP regions, device types, or browser fingerprints in short timeframes are often part of bot farms. Even if credentials are “fresh”, internal logs remember this history.

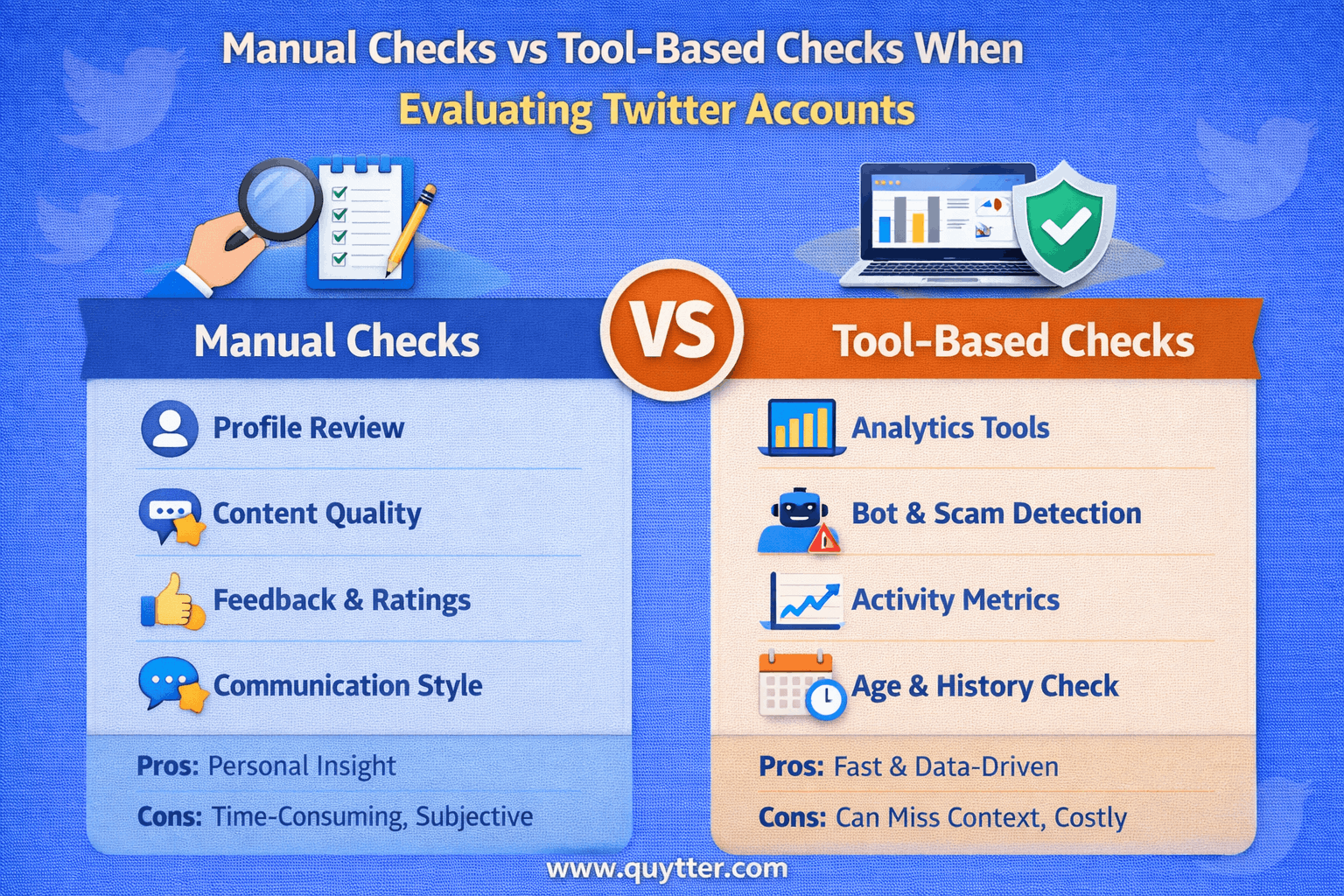

Manual Checks vs Tool-Based Checks When Evaluating Accounts

There is a misconception that tools can replace human judgment when evaluating Twitter accounts. In practice, tools and manual inspection serve different purposes and should never be used in isolation.

Manual checks excel at identifying narrative inconsistencies. These include mismatches between bio claims and timeline behavior, unnatural conversation tone, or engagement that feels staged. Human intuition is particularly strong at spotting recycled content and shallow persona building.

Tool-based checks, on the other hand, are valuable for surface-level validation. Metrics like follower authenticity estimates, engagement ratios, and growth curves help filter out obviously bad inventory. However, many tools rely on heuristic models that lag behind platform enforcement logic. Passing a tool score does not equal safety.

The most reliable buyers use a layered approach:

- First pass with tools to eliminate obvious junk

- Second pass with manual timeline and engagement review

- Final pass by mapping account behavior to intended use case

Accounts suitable for light branding may fail under automation stress. Accounts that survive automation may be unsuitable for public-facing brands. Context matters more than raw scores.

Seller-Level Red Flags Buyers Ignore Too Often

Many buyers focus exclusively on the account and ignore the seller. This is a costly mistake. Seller behavior often predicts account quality more accurately than metrics.

Sellers who refuse to provide creation method transparency usually have something to hide. Legitimate providers can explain whether accounts were phone verified, email aged, or organically grown without revealing trade secrets. Vague answers are red flags.

Another signal is inventory uniformity. Large batches of accounts with nearly identical ages, follower counts, or activity levels often indicate bulk automation. Natural inventories show variation.
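Inventory uniformity can be estimated by measuring how much each metric varies across a seller's batch. In this minimal sketch the field names (`age_days`, `followers`, `tweets`) and the sample batch are assumptions about how a buyer might record listings; they do not correspond to any real marketplace format.

```python
from statistics import mean, pstdev

def batch_uniformity(listings, fields=("age_days", "followers", "tweets")):
    """Coefficient of variation per field across a batch of listings.

    Values close to 0 mean the accounts are nearly identical on that
    metric, which is unusual for organically grown inventory.
    """
    if not listings:
        return {}
    result = {}
    for field in fields:
        values = [listing[field] for listing in listings]
        avg = mean(values)
        result[field] = pstdev(values) / avg if avg else 0.0
    return result

# Hypothetical batch from one seller: almost no variation between accounts.
batch = [
    {"age_days": 400, "followers": 1000, "tweets": 50},
    {"age_days": 401, "followers": 1010, "tweets": 51},
    {"age_days": 399, "followers": 990, "tweets": 49},
]
print(batch_uniformity(batch))
```

Low variation on a single field can be innocent, for example accounts deliberately grouped by age. Low variation on every field at once is the pattern worth questioning.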

Refund policies are also revealing. Sellers who offer replacements only for “instant bans” but not shadow limitations are offloading long-term risk onto buyers. Experienced buyers negotiate based on performance windows, not login success.

Trust in this market is built through repeatability, not promises.

Use-Case Alignment: The Most Overlooked Safety Layer

One of the biggest reasons accounts fail after purchase is misaligned usage. An account that survives passive posting may collapse under aggressive outreach. An account built for engagement pods may fail when switched to automation bots.

Before buying, buyers should map:

- Intended action types (posting, following, DMing)

- Expected daily volume

- Niche sensitivity

- Brand exposure level

Accounts should be evaluated not on absolute quality, but on fitness for purpose. This mindset dramatically reduces suspension rates and wasted spend.

How Quytter Helps Buyers Avoid Fake or Botted Twitter Accounts

This is where most buyers hit a wall. Even with knowledge, manual vetting at scale is time-consuming and error-prone. Quytter exists to solve this exact gap between theory and execution.

Instead of selling random inventory, Quytter focuses on use-case matched Twitter accounts. Each account is evaluated based on behavioral history, risk exposure, and suitability for specific workflows such as automation, marketing, or network building.

What makes Quytter different is not just sourcing, but pre-purchase risk filtering. Accounts are screened for:

- Historical automation footprints

- Engagement authenticity

- Behavioral consistency

- Infrastructure contamination risk

Buyers are not just purchasing accounts. They are reducing downstream costs associated with bans, shadow limits, and network resets. For teams running automation or scaling outreach, this difference compounds quickly.

Conclusion

Spotting fake or botted Twitter accounts before buying is not about paranoia. It is about pattern recognition. Fake metrics, recycled accounts, and hidden automation footprints all leave traces for those who know where to look.

Buyers who rely on surface signals pay the highest price. Those who evaluate behavior, seller integrity, and use-case alignment build sustainable systems instead of disposable assets. Whether you are running campaigns, automation, or network growth, the goal is the same: reduce uncertainty before money changes hands.

When account quality becomes a strategic decision rather than a gamble, long-term performance follows naturally.